10 Ultra-Fast Signs an AI Video Is Fake - How to Spot It in 5 Seconds


AI video tools are getting better fast. A fake clip that looked obvious a year ago can now fool millions on TikTok, X, Facebook, and YouTube. That is why viewers need a 5-second test: a simple set of visual checks you can use before liking, sharing, or turning a clip into “breaking news.”

This guide breaks down 10 ultra-fast signs of an AI-generated video, explained in plain English. These are not slow forensic methods. They are quick visual clues that help journalists, creators, and everyday users spot suspicious footage almost instantly.

Why the “5-second test” matters

Most viral misinformation wins because people react before they verify. AI videos are designed to exploit that habit. A dramatic explosion, a crying celebrity, a politician saying something shocking, or a “live CCTV” clip can go viral long before anyone checks whether it is real.

The good news is that even advanced AI video still struggles with consistency. In many fake clips, something breaks under close attention: the face, the hands, the text, the physics, or the timing.

If you train your eye, you can often catch a fake in seconds.

1. The mouth moves, but the words do not feel right

This is still one of the fastest tells.

In many AI videos, the lips open and close in a way that looks almost correct, but not fully human. The timing may feel slightly off. The mouth shape may not match certain sounds. Teeth may blur together. The jaw may move too smoothly, as if it is gliding instead of speaking.

A real video usually has tiny natural imperfections. An AI-generated face often looks too controlled.

5-second test: Watch the mouth only and mentally block out the rest of the frame. Does the speech look physically believable?

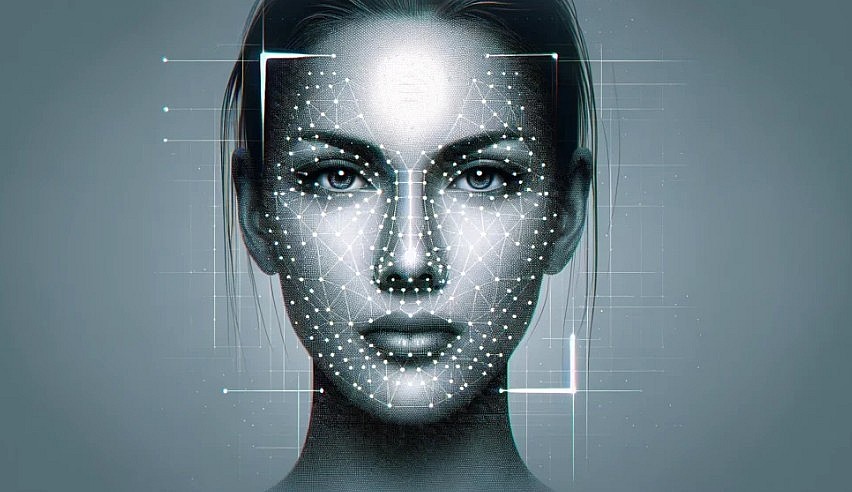

2. The eyes look “alive,” but not truly human

AI has improved with faces, but the eyes still give it away surprisingly often.

Look for a blank stare, unnatural blinking, mismatched eye direction, or reflections that do not fit the light in the room. Sometimes one eye tracks differently from the other. Sometimes blinking is too rare, too regular, or oddly timed.

Human eyes constantly make tiny adjustments. AI eyes often look polished but emotionally empty.

5-second test: Zoom in mentally on the eyes. Do they react naturally, or do they look like a mask?


3. Hands are still a major warning sign

If a person in the video points, waves, grips a microphone, or holds a phone, check the hands immediately.

AI often creates fingers that are too long, fused, bent in impossible ways, or inconsistent from one moment to the next. Even when the number of fingers is correct, the motion can feel rubbery or boneless.

This remains one of the easiest shortcuts in visual verification.

5-second test: The moment hands appear, pause on them. If the hand anatomy feels wrong, the whole clip is suspect.

4. Text in the background looks strange

AI video tools still struggle with readable text. Street signs, shop names, subtitles, license plates, uniforms, posters, and labels can become warped, misspelled, or unstable between frames.

That matters because real cameras capture text consistently from frame to frame, even at low quality. AI often produces letters that look almost right from far away but collapse when you focus on them.

5-second test: Find one piece of text in frame. If it is gibberish, melting, or changing shape, be careful.

5. Hair, ears, and glasses look unstable

Most people check the face first, but the best clues are often at the edges.

Hairlines may shimmer. Earrings may disappear. Glasses may bend or merge into the face. Ears can shift shape between frames. These are classic “boundary errors,” where AI struggles to keep small details stable during motion.

A real video can be blurry. But blur is different from structural instability.

5-second test: Ignore the center of the face. Look at the edges: hair, ears, frames, jewelry.

6. The background behaves like a dream

Sometimes the person looks convincing, but the room behind them does not.

Chairs may warp. Walls may breathe. Lamps may change shape. Cars may slide oddly. Buildings in the distance may bend when the camera moves. AI tends to keep the main subject more coherent than its surroundings, so the background is often less stable.

This is especially common in fast-moving clips, dramatic news-style videos, and fake surveillance footage.

5-second test: Stop looking at the person. Watch the background for two seconds. Does anything drift, stretch, or morph?

7. Physics feel wrong

AI video can imitate motion, but real-world physics is still hard to fake perfectly.

Smoke may move unnaturally. Fire may look decorative rather than destructive. Falling objects may lack weight. Running people may seem to glide. Shadows may not match the movement. Reflections may lag or disappear.

You do not need to be a scientist to notice when something “feels off.” That instinct matters.

5-second test: Ask one question: Does this move like real life, or like a visual effect?

8. The camera movement feels impossible

Many fake videos try to look cinematic or “live,” but the camera motion can betray them.

You may see overly smooth push-ins, floating handheld shots, impossible angle changes, or sudden perspective shifts that do not fit a real phone, drone, CCTV camera, or tripod. Some AI clips mimic security footage but still move like a movie camera.

Real cameras have physical limits. AI cameras often do not.

5-second test: Ask whether a real human, phone, CCTV unit, or dashcam could have captured that exact motion.

9. Emotion and body language do not match

A person may look scared, angry, or excited in an AI clip, but the body language often feels disconnected. The face may show emotion while the shoulders remain dead still. A speaker may sound urgent while the timing of gestures feels delayed. Crowds may react too uniformly.

Real human expression is messy and layered. AI often imitates the headline emotion but misses the subtle coordination of body, voice, and timing.

5-second test: Does the whole body support the emotion, or only the face?

10. The clip is engineered to stop you from checking

This is the final and most overlooked sign.

Many AI videos are short for a reason. They rely on speed, shock, and low scrutiny. They may be five to eight seconds long, cropped tightly, heavily compressed, reposted without source, or covered with dramatic captions. That does not prove they are fake, but it is a major warning pattern.

The shorter and more sensational the clip, the more careful you should be.

5-second test: If the video is ultra-short, ultra-dramatic, and source-free, treat it as unverified.
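For readers who like to see a rule written out, the test above can be sketched as a tiny Python function. This is only an illustration: the function name, its parameters, and the 8-second cutoff (taken from the "five to eight seconds" pattern described above) are assumptions, and judging whether a clip is "sensational" is still up to the viewer.

```python
def treat_as_unverified(duration_s, sensational, has_source, short_cutoff_s=8.0):
    """Return True when a clip matches the warning pattern from sign 10.

    duration_s     -- clip length in seconds
    sensational    -- your own judgment: is the clip ultra-dramatic?
    has_source     -- True if the original poster or source is known
    short_cutoff_s -- assumed cutoff for "ultra-short" (hypothetical value)
    """
    is_short = duration_s <= short_cutoff_s
    # All three conditions must hold: short, dramatic, and source-free.
    return is_short and sensational and not has_source


# A 6-second, dramatic, unsourced repost matches the pattern.
print(treat_as_unverified(6, sensational=True, has_source=False))
# A 6-second dramatic clip with a known source does not.
print(treat_as_unverified(6, sensational=True, has_source=True))
```

A True result does not mean the clip is fake. It only means the clip should be treated as unverified until someone checks it.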

The fastest checklist: a 5-second AI video test

When a suspicious clip appears, run this mental sequence:

Face. Eyes. Hands. Text. Background.

If even one of those looks seriously wrong, do not trust the video yet. If two or three look wrong, assume it needs verification before sharing.
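The decision rule above can also be written as a short Python sketch. The names `CHECKS` and `triage` and the exact wording of the returned labels are made up for illustration. The source rule covers one to three problems; treating four or five the same way as "two or three", and the label for zero problems, are assumptions.

```python
# The five quick checks, in the order of the mental sequence.
CHECKS = ("face", "eyes", "hands", "text", "background")


def triage(flags):
    """Map quick-check results to a next step.

    flags -- dict of check name -> True if that element looks seriously wrong.
             Checks that are missing from the dict count as "looked fine".
    """
    unknown = set(flags) - set(CHECKS)
    if unknown:
        raise ValueError(f"unknown checks: {sorted(unknown)}")

    wrong = sum(1 for name in CHECKS if flags.get(name, False))

    if wrong == 0:
        # Assumption: the article gives no rule for this case.
        return "no obvious red flags"
    if wrong == 1:
        return "do not trust yet"
    # Two or more problems (the article says "two or three").
    return "verify before sharing"


print(triage({"hands": True}))
print(triage({"hands": True, "text": True}))
```

Note that "no obvious red flags" is not the same as "real". A clean quick scan only means the clip passed the fastest checks.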

What to do after the 5-second test

A quick visual scan is only step one. For high-stakes topics such as war, politics, disasters, crime, or celebrity scandals, do more:

Check who posted it first.

Look for longer versions of the clip.

Compare it with reports from credible news outlets.

Search for matching landmarks, weather, shadows, and timestamps.

See whether trusted fact-checkers or forensic analysts have reviewed it.

The rule is simple: viral does not mean verified.

Final takeaway

The best defense against fake AI video is not a complicated software tool. It is a trained habit.

In just five seconds, you can often spot the biggest red flags: bad lip-sync, empty eyes, warped hands, broken text, unstable hair and glasses, unstable backgrounds, strange physics, impossible camera motion, mismatched body language, and suspiciously short viral framing.

AI video is improving, but so can your eye.

The next time a shocking clip appears on your feed, do not ask only, “Is this dramatic?” Ask, “Does this behave like reality?”